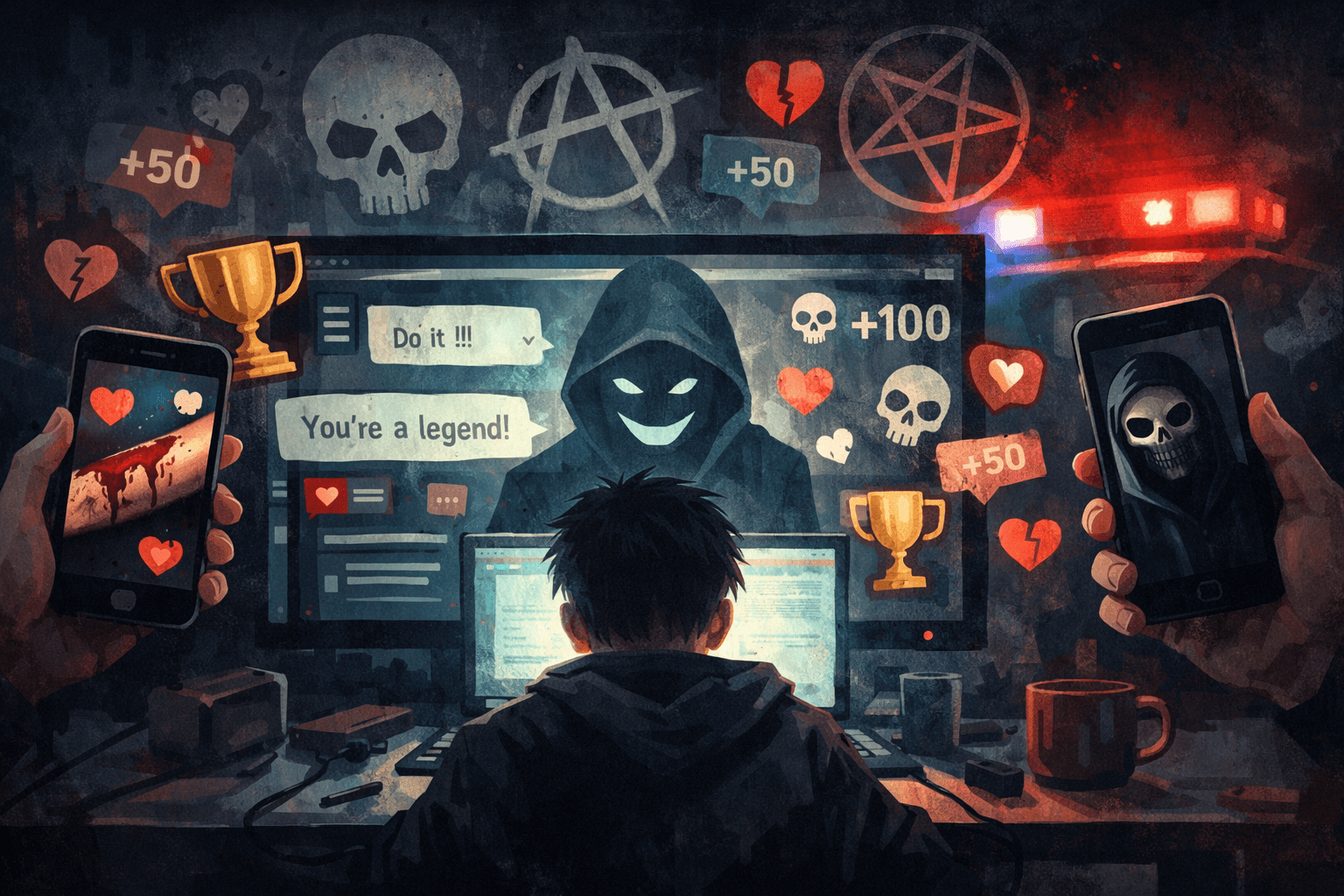

Children are being pulled into hidden online networks where cruelty, self-harm and abuse are treated as status symbols rather than warning signs.

Editor’s note

This piece discusses online child exploitation, coercion and self-harm content in a systems-focused way. We’ve avoided graphic detail. Our aim at Not Here Long is to interrogate the incentives and governance failures that make these harms scalable, not to amplify them.

Online safety is often framed as a question of screen time or inappropriate content. But recent reporting has highlighted something far more unsettling: loosely organised online networks where children and teenagers trade harmful material as a way to gain recognition, influence and belonging.

These groups — often referred to collectively as “the Com” — operate across platforms such as Telegram, Discord and gaming-adjacent spaces. They are fluid, decentralised and deliberately difficult to trace. What binds them together is not money or ideology, but status. Participants earn attention by sharing extreme content, including self-harm imagery, harassment campaigns and abuse directed at others.

For many young people, involvement begins in entirely ordinary digital spaces. Parents describe children drawn in through gaming communities or social apps, before being gradually exposed to darker material. Over time, violent memes, self-harm imagery and increasingly disturbing behaviour become normalised, reframed as humour, bonding or proof of loyalty.

Once inside these networks, vulnerability is often exploited. Young people struggling with mental health, identity or isolation may be encouraged to harm themselves or others under the guise of support or belonging. Abusive acts are presented as “challenges” or dares, turning harm into a competitive exercise. In some cases, this escalates into real-world consequences, including harassment campaigns, vandalism and false emergency call-outs known as “swatting”.

These spaces are not driven by a single extremist belief system. While far-right or occult symbols may appear, they are often used performatively rather than ideologically. Members move between overlapping networks, making intervention and enforcement difficult.

Parents who uncover their children’s involvement describe shock, guilt and helplessness. Some find that restricting device access and closely monitoring online activity can disrupt the cycle. But safeguarding experts argue that responsibility cannot rest with families alone.

When harm becomes a game, the line between performance and reality collapses fast.

Why this matters

This story isn’t really about “dark corners of the internet”. It’s about what happens when platforms reward attention at any cost, and children learn — early — that shock, cruelty and self-destruction are ways to be seen.

These groups don’t thrive because young people are uniquely broken or malicious. They thrive because status has replaced safety, and because online spaces are designed to amplify extremes while disclaiming responsibility for the consequences.

The most uncomfortable truth is this: these networks are not an anomaly. They are an exaggerated version of the same incentive structures that dominate mainstream platforms — visibility over wellbeing, engagement over care, virality over responsibility. Children are simply encountering those dynamics without the filters, defences or power to opt out.

Framing this as a parental failure or a fringe problem misses the point. This is a systemic issue, built into how digital communities form, how content is rewarded, and how little meaningful accountability exists when harm is distributed across platforms and borders.

The limits of regulation, and the illusion of AI fixes

Governments increasingly point to regulation and artificial intelligence as solutions. Online safety laws promise stronger enforcement, while platforms promote AI moderation as proof that harm can be detected and removed at scale. But this case exposes the limits of both.

Regulation struggles when abuse is decentralised, cross-platform and constantly mutating. Rules written for individual platforms are ill-suited to cultures that exist between them, migrating as soon as scrutiny increases. Enforcement becomes reactive rather than preventative, always one step behind.

AI moderation, meanwhile, is often deployed as a shield rather than a safeguard. Automated systems are trained to spot known patterns — not evolving social dynamics, coded language or the slow coercion of vulnerable users. Worse, these systems are frequently optimised to reduce platform liability, not to protect children. What gets removed is what is easiest to detect, not what is most damaging.

If harm is treated as a technical problem, it will receive technical fixes — imperfect, opaque and ultimately inadequate. What this moment demands is a deeper reckoning with platform design itself: how status is awarded, how visibility is engineered, and how responsibility is avoided when damage is diffuse.

Closing

Until regulation targets incentives rather than symptoms, and until AI is used to support accountable human judgement rather than replace it, stories like this will keep surfacing. Not as shocking exceptions, but as predictable outcomes of a digital environment that still treats harm as collateral rather than consequence.